Trick Vulkan API with the Vulkan API (Mirage’s Multisampled Depth)

Back again, and this is the postponed post I promised!!! It’s been sitting as a draft for a few months!!

As you may have guessed from a previous post, today’s post is all VK. I want to discuss a problem I hit while working on Mirage, and how the API’s limitations and rules led me to trick the API with its own rules to get something that was otherwise not possible. In other words, to do something that had been stripped out of the API precisely to prevent it. There is definitely a lot more new stuff in Mirage, but I found this one worth documenting while the whole story and investigation are still fresh in my head.

The Backstory..

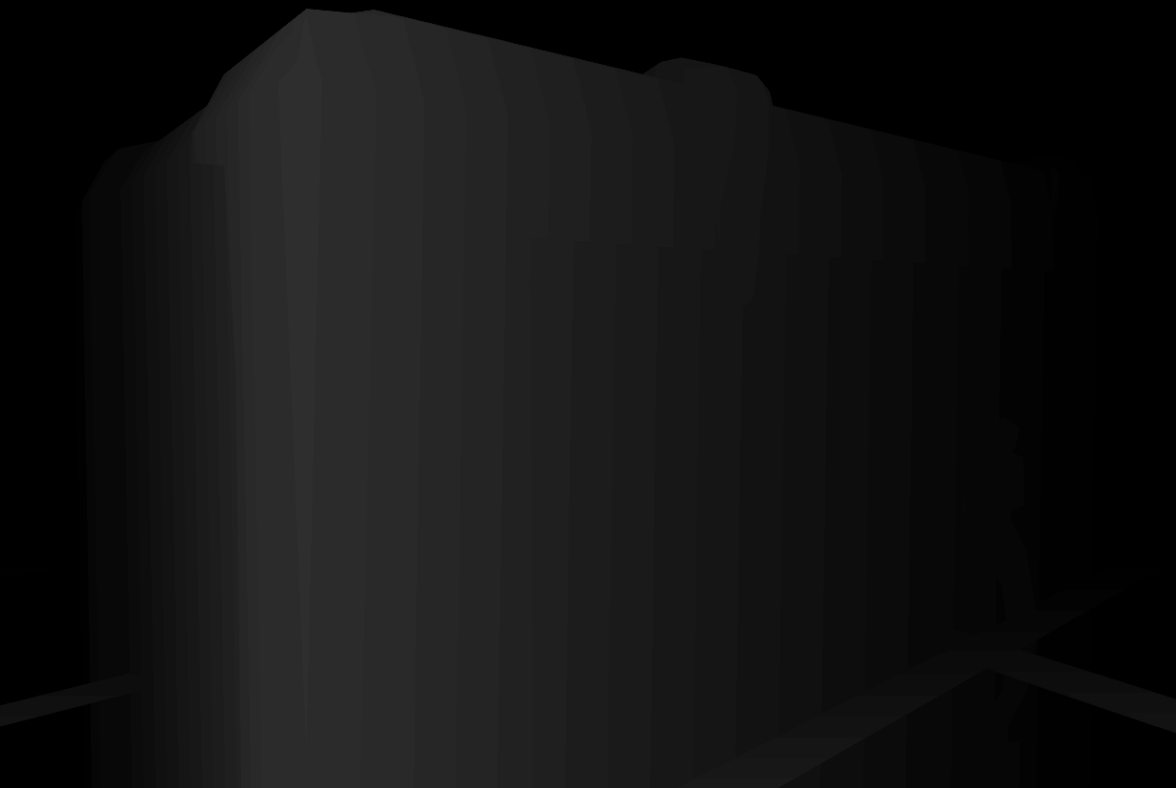

A few months ago, I wanted to add more visualization modes (viewmodes) to Mirage, to help me debug issues where possible without taking captures and digging deep in NSight or RenderDoc. One of the things I needed to support was the depth image.

I decided to prioritize depth visualization over many other debugging features (such as skeleton hierarchy visualization, shader complexity visualization, or even the RenderDoc integration itself), because being able to display and manipulate the depth image (which is in VK_FORMAT_D32_SFLOAT_S8_UINT format) like a normal image (a color image, or a texture loaded from disk, which in Mirage is usually VK_FORMAT_B8G8R8A8_UNORM) is a win-win. It makes it easy (and fun) later on to use that depth image for fancy effects such as fog or depth of field (already in use, but let’s not spoil things right now!!! I plan to discuss those in a future post), or AA, etc. In fact, it is really important to keep depth in a common, unrestricted format, not only for the rendering techniques I know about (and the ones I don’t), but also for investigating new techniques (I already have some in mind). Having depth as a separate resource that any shader can read at any time helps a lot when testing and exploring new ground.

The issue!

So, where is the issue? Why did the depth need to be stored in a four 8-bit channel image format in the first place? And if it only needs to be visualized, why not use it in the D32 format it already is?

The issue is simply MSAA, plus my feature requirement (and I’m usually serious about my features, regardless of how much time they cost): the depth visualization has to be a true representation of the final image. So if my final image is multisampled (MSAA was enabled at the start of the frame), I need my depth to be multisampled/anti-aliased as well. And if not, I need my depth to be as jaggy as expected, so it faithfully represents my final render. This is basically the main source of the issue. One of my early concerns was the visual artifacts that could show up when a depth-based post-processing shader runs on a multisampled final image, only because my depth wasn’t multisampled.
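To make the edge problem concrete, here is a small CPU-side sketch (plain C, not Vulkan code) of what a box-filter resolve does to depth at a triangle edge. It assumes the resolve simply averages the samples, which is the common behavior, although the Vulkan spec leaves resolves of float and UNORM data implementation-dependent. `resolve_depth_samples` is a hypothetical helper, not part of Mirage or the API.

```c
#include <assert.h>

/* Box-filter resolve of one pixel's depth samples (CPU emulation).
   Assumption: the resolve is a plain average, which is common but
   implementation-dependent in Vulkan for float/UNORM data. */
float resolve_depth_samples(const float *samples, int count) {
    assert(count > 0);
    float sum = 0.0f;
    for (int i = 0; i < count; ++i)
        sum += samples[i];
    return sum / (float)count;
}
```

With 4 samples, an edge pixel whose samples are {0.25, 0.25, 1.0, 1.0} resolves to 0.625, while an interior pixel with four 0.25 samples stays at 0.25. Without MSAA, that same edge pixel would snap to either 0.25 or 1.0, which is exactly the jaggy depth that would not match a multisampled final image.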

With Vulkan, visualizing the depth in its default state might not be an issue at all: it can be used as a render pass attachment by passing it to VkSubpassDescription as the pDepthStencilAttachment member, and that depth image can then be displayed on screen on a single quad. The core issue is that depth/stencil can’t be resolved (MSAA) the way color is. What is certain is that in Vulkan 1.0, the pResolveAttachments array of VkSubpassDescription only pairs with color attachments. I also read a note somewhere (which I’m not quite sure about) saying depth/stencil was a resolvable attachment in early stages of the Vulkan API and was stripped out later.
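A side note on showing depth on a quad: raw depth values are very non-linear, so most of the scene tends to show up close to white. A common fix in a viewmode is to linearize before display. The sketch below assumes a standard (not reversed-Z) perspective projection with Vulkan’s [0,1] depth range and hypothetical near/far plane values `n` and `f`; in practice this math would usually live in the visualization fragment shader.

```c
#include <assert.h>

/* Convert a raw [0,1] depth-buffer value into a normalized linear distance
   for display (0 at the near plane, 1 at the far plane).
   Assumption: standard (not reversed-Z) perspective projection whose depth
   mapping is d = f/(f-n) - (f*n)/((f-n)*z), with z the eye-space distance. */
float linearize_depth(float d, float n, float f) {
    assert(n > 0.0f && f > n);
    assert(d >= 0.0f && d <= 1.0f);
    /* Invert the mapping above to recover the eye-space distance z. */
    float z = (f * n) / (f - d * (f - n));
    /* Remap [n, f] to [0, 1] so it can be shown as a gray level. */
    return (z - n) / (f - n);
}
```

For example, with n = 1 and f = 10, a raw depth of 0.5 maps to only about 0.09 of the way from the near plane to the far plane, which is why un-linearized depth looks washed out.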

On the other hand, there is an actual workflow for depth resolving (well, by definition and explanation in the API spec), similar to how color gets resolved: chaining a valid VkSubpassDescriptionDepthStencilResolve struct to the subpass description. So why not use that?

Well, first, it is not supported in my current VK version (1.0), which I’m not planning to change at this point (it comes from VK_KHR_depth_stencil_resolve, which became core in Vulkan 1.2). Secondly, in order to use VkSubpassDescriptionDepthStencilResolve, I’d have to use what I call the alternative API, or the “2” API as many people refer to it. It is not really an alternative, but that was my initial perception of it. I usually use structures and functions with plain names, but it is quite common in Vulkan to find multiple versions of the same structure or function whose names end with an index number. In this case, I use VkSubpassDescription for my subpass creation, but resolving depth via VkSubpassDescriptionDepthStencilResolve requires VkSubpassDescription2. This might sound like a non-issue, but as soon as you start replacing those structures with their “2” versions, it becomes an endless-changes nightmare, because almost everything in Mirage’s code would need to use the same version (at least the render pass related code so far, plus bits of the instance and logical device setup). Otherwise, there are errors that prevent the renderer from showing even a black screen (I personally call it a surface failure; I don’t know what the common term for that is nowadays). For example, for my change from VkSubpassDescription to VkSubpassDescription2 to work, I need to change my (multiple) VkAttachmentReference structs to VkAttachmentReference2, which has a different number of members (it adds sType, pNext and aspectMask), and that matters for the way I initialize them. So you get the point of what I mean by “endless changes nightmare”.

It is also worth mentioning that switching to the other version is usually not just a matter of appending the index to everything. These are different versions, so they have different members per structure, and possibly different parameters per function signature! The whole idea behind it is not clear to me, or to many other people: some say it is deprecated, others say it is the new API, others say it is an ongoing fix, and others say it is the previous API and the one without an index is always the final version. One thing that is clear, at least for the render pass case: the “2” structures came with VK_KHR_create_renderpass2 (core in Vulkan 1.2), and unlike VkSubpassDescription they carry sType and pNext, which is exactly what allows VkSubpassDescriptionDepthStencilResolve to be chained onto VkSubpassDescription2. Beyond that, I decided not to get into it. I had already spent two or three days understanding it and trying to flip Mirage to the “2” version of the specs, and found it was not worth it in the end. If my only reason to turn the entire codebase upside down is depth resolving, then I have to find another way that works with the current codebase.

Of everything I read, I’d like to leave that Reddit post about the different spec versions here; it is a good read and has some info that might reduce the confusion.

The Solution..

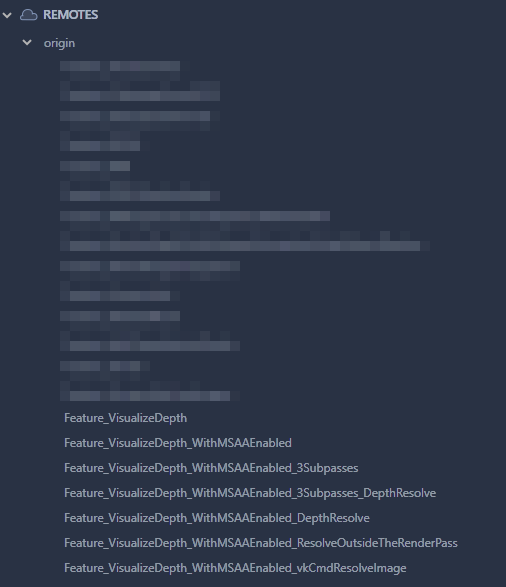

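So I decided to use the vkCmdResolveImage function to resolve the depth image. But this is not as easy as it sounds! For starters, vkCmdResolveImage only accepts the color aspect (VK_IMAGE_ASPECT_COLOR_BIT), so a VK_FORMAT_D32_SFLOAT_S8_UINT image can’t simply be handed to it.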

When doing MSAA (resolving the swapchain image for color), the depth attachment has to use the same sample count as the MSAA color image, which is 8 samples (VK_SAMPLE_COUNT_8_BIT). But when we present, we present the final resolved single-sample image (VK_SAMPLE_COUNT_1_BIT; there is no point in presenting 8 samples), while the depth stays multisampled, with no single-sample version of it anywhere.
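Since depth and color must share a sample count, that count has to be one the device supports for both. Here is a minimal plain-C sketch of the usual selection logic. The masks mirror VkSampleCountFlagBits values (VK_SAMPLE_COUNT_1_BIT = 0x1 up to VK_SAMPLE_COUNT_64_BIT = 0x40); in a real renderer they would come from VkPhysicalDeviceLimits::framebufferColorSampleCounts and framebufferDepthSampleCounts. The function and type names are hypothetical.

```c
#include <assert.h>

/* Bit values mirror VkSampleCountFlagBits: bit value == samples per pixel
   (VK_SAMPLE_COUNT_1_BIT = 0x1, ..., VK_SAMPLE_COUNT_8_BIT = 0x8,
   ..., VK_SAMPLE_COUNT_64_BIT = 0x40). "_8_BIT" means 8 samples, not 8 bits. */
typedef unsigned int SampleCountMask;

/* Highest sample count usable by both the color and the depth attachment.
   In a real renderer, `color` and `depth` would be
   VkPhysicalDeviceLimits::framebufferColorSampleCounts and
   VkPhysicalDeviceLimits::framebufferDepthSampleCounts. */
unsigned int max_common_sample_count(SampleCountMask color, SampleCountMask depth) {
    assert(color != 0u && depth != 0u);
    SampleCountMask both = color & depth;
    /* Walk from 64 samples down to 2, returning the first shared bit. */
    for (unsigned int count = 0x40u; count > 1u; count >>= 1)
        if (both & count)
            return count;
    return 1u; /* fall back to single sampling */
}
```

For instance, a color mask of 0x0F (1, 2, 4 or 8 samples) combined with a depth mask of 0x07 (1, 2 or 4 samples) gives 4, so 8x MSAA would not be an option for that color/depth pair.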

The VK issue is that depth can’t be resolved as part of the render pass attachments through the pResolveAttachments array (or maybe it could at some point). So instead, I use vkCmdResolveImage to resolve the depth image to a single-sample image, so it can be handed to the shader whenever it needs to be displayed on screen. The original idea was to change the format via vkCmdBlitImage, since blitting can convert formats along the way, but a blit requires a single-sample source and can’t convert depth/stencil formats. There are quite a few ways to change image formats and/or sample counts.
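vkCmdResolveImage works on color images, so whatever gets resolved has to be stored in a color format first. Here is one way that could look, sketched on the CPU under stated assumptions. The depth is written as a gray level into the B, G and R channels of a VK_FORMAT_B8G8R8A8_UNORM texel, the resolve is emulated as a round-to-nearest per-channel average (the spec leaves UNORM resolves implementation-dependent), and the value is read back for display. This illustrates the idea and is not necessarily how Mirage packs its depth; all names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* One texel of a VK_FORMAT_B8G8R8A8_UNORM image, in memory (byte) order. */
typedef struct { uint8_t b, g, r, a; } TexelBGRA8;

/* Write a [0,1] depth as a gray level into B, G and R, with opaque alpha. */
TexelBGRA8 encode_depth_gray(float depth) {
    assert(depth >= 0.0f && depth <= 1.0f);
    uint8_t level = (uint8_t)(depth * 255.0f + 0.5f); /* nearest of 256 levels */
    TexelBGRA8 t = { level, level, level, 255 };
    return t;
}

/* CPU stand-in for a color resolve: round-to-nearest average per channel. */
TexelBGRA8 resolve_bgra8(const TexelBGRA8 *samples, int count) {
    assert(count > 0);
    unsigned n = (unsigned)count, b = 0, g = 0, r = 0, a = 0;
    for (unsigned i = 0; i < n; ++i) {
        b += samples[i].b;
        g += samples[i].g;
        r += samples[i].r;
        a += samples[i].a;
    }
    TexelBGRA8 out = {
        (uint8_t)((b + n / 2) / n), (uint8_t)((g + n / 2) / n),
        (uint8_t)((r + n / 2) / n), (uint8_t)((a + n / 2) / n)
    };
    return out;
}

/* Read the gray level back as a [0,1] depth for the visualization shader. */
float decode_depth_gray(TexelBGRA8 t) {
    return (float)t.r / 255.0f;
}
```

Resolving two 0.25 samples and two 1.0 samples gives a gray level of 160, which reads back as roughly 0.627 against the exact 0.625. That 1/255 step is fine for a viewmode; for effects that need finer depth, a higher-precision single-channel color format (for example VK_FORMAT_R32_SFLOAT, if the device supports it as a resolvable color attachment) may be a better fit.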

There were other solutions, but none worked out. One was a dedicated pass just to resolve the depth, but it didn’t work, as the issue remained the same (sample count!). Another test was to resolve color after the depth was calculated, so formats wouldn’t be a concern. But then we got a black screen, as the last pass had no depth!

Many methods were tested, and each attempt got its own branch!

The Result

The end result is pretty satisfying in every aspect. I now have a very accurate depth image stored at the back of my head (well, at the back of the engine’s head) that can be used at any time. This image is a true representation of the final image’s aliasing (as long as the active anti-aliasing method is MSAA; FXAA is a different story), and I can use it at any time to visualize the multisampled scene depth.

- It is really important to know the API’s actual current limitations and not rely on random old posts; things change all the time.

- Not everything the API offers works efficiently, and there are cases where outside-the-box solutions can be more efficient.

- Don’t hesitate to implement a feature in multiple ways, as many as you can, and eventually set the best one as the main one. You can always remove the others, or keep them as alternatives. Who knows what could change in the future!

-m